Imagine a world where large language models (LLMs) can remember every detail from past interactions, much as human memory retains experience. The speaker explores this idea of infinite memory through AI-generated "dreams," inspired by REM sleep in the human brain.

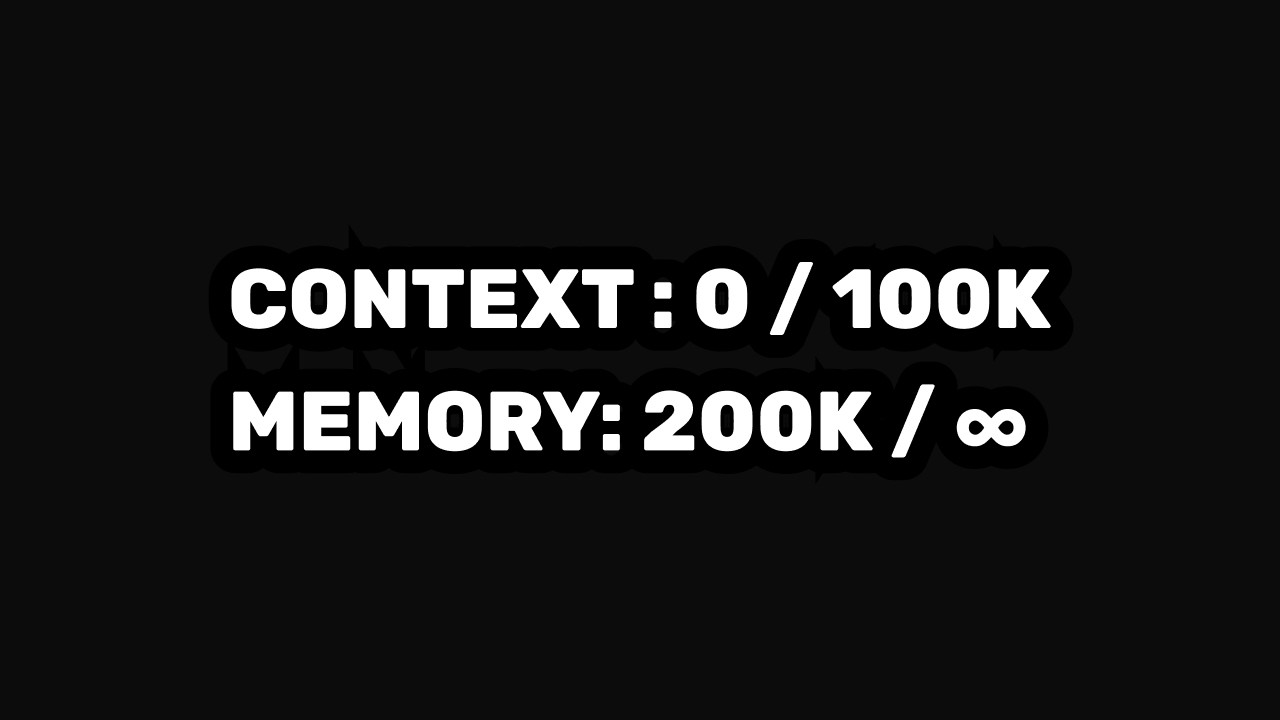

Current LLMs are limited by their context windows: they can only recall information that fits within a fixed amount of recent text. Methods like retrieval-augmented generation work around this by fetching stored text at query time, but in the speaker's view they do not equate to true understanding. The speaker introduces a different approach: using AI-generated dreams to create diverse training data, so the model can keep learning continuously.

Learning from Human Brain Patterns

The breakthrough idea comes from studying REM sleep, where dreams help humans integrate new experiences. The speaker references a pivotal paper, "Dream to Predict," which suggests dreams play a crucial role in predictive coding. By emulating this process, LLMs can potentially overcome their current limitations.

The speaker tests the idea by generating dreams from conversations. Although initial attempts yielded suboptimal results, the method shows potential for pushing the boundaries of AI memory.
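
To make the idea concrete, here is a minimal sketch of how dreams might be generated from a conversation and collected as training data. It is not the speaker's implementation: the prompt styles in `DREAM_STYLES`, the record layout, and every function name are assumptions made for illustration, and `echo_model` is a stand-in for whatever LLM call would actually produce the dream text.

```python
import json
import random
from typing import Callable, Dict, List

Message = Dict[str, str]  # e.g. {"role": "user", "content": "..."}

# Hypothetical dream framings (an assumption, not taken from the talk).
# Each one asks the model to re-express the same conversation differently,
# which is where the diversity of the training data would come from.
DREAM_STYLES = [
    "Retell this conversation as a surreal story that keeps every fact intact.",
    "Write a short quiz, with answers, about the facts in this conversation.",
    "Imagine a future conversation in which these facts come up again.",
]


def build_dream_prompts(conversation: List[Message], n_dreams: int, seed: int = 0) -> List[str]:
    """Pair the conversation transcript with randomly chosen dream framings."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    transcript = "\n".join(f"{m['role']}: {m['content']}" for m in conversation)
    return [f"{rng.choice(DREAM_STYLES)}\n\n{transcript}" for _ in range(n_dreams)]


def generate_dreams(
    conversation: List[Message],
    generate: Callable[[str], str],
    n_dreams: int = 3,
    seed: int = 0,
) -> List[Dict[str, str]]:
    """Call the supplied text generator once per prompt and collect the results."""
    records = []
    for prompt in build_dream_prompts(conversation, n_dreams, seed):
        records.append({"prompt": prompt, "dream": generate(prompt)})
    return records


def to_jsonl(records: List[Dict[str, str]]) -> str:
    """Serialize records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(r) for r in records)


def echo_model(prompt: str) -> str:
    """Placeholder generator: echoes the framing line instead of calling an LLM."""
    return "DREAM: " + prompt.splitlines()[0]


conversation = [
    {"role": "user", "content": "My dog is called Biscuit."},
    {"role": "assistant", "content": "Nice to meet Biscuit!"},
]
dreams = generate_dreams(conversation, echo_model, n_dreams=3)
print(to_jsonl(dreams))
```

In a real pipeline, `echo_model` would be swapped for an actual model call, and the resulting records would feed a fine-tuning step. That step is left out here because the source does not describe how it is done.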