Valuable Insights from the Video Transcript

Key Points

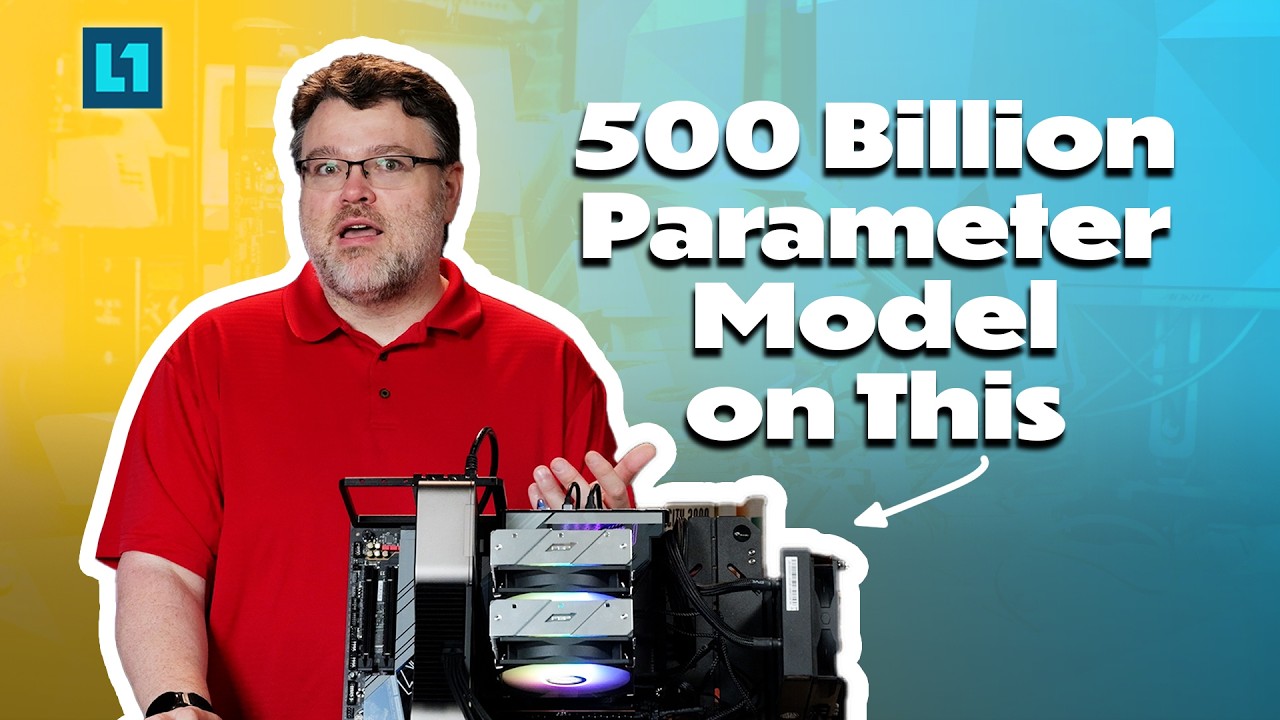

- Running Advanced AI Models Locally: A desktop built on AMD's AM5 platform can run a 500-billion-parameter AI model entirely offline, giving the user full control without expensive servers or subscriptions. This marks a shift toward local AI that puts capable models in individuals' hands.

- Hardware Setup: The working configuration pairs an AMD Ryzen 7 9800X3D CPU (AM5 socket) with 128 GB of RAM and an RTX 3090 GPU. It highlights how much memory capacity and bandwidth matter for efficient AI processing.

- Quantization and Model Efficiency: The model shown is quantized, meaning its weights are stored at lower numeric precision. This lets it run on modest hardware with reasonable performance and brings capable AI tools within reach of everyday users.

- Contextual Understanding: The model handles a long context (up to 48,000 tokens), so users can supply source material directly in the prompt. This reduces the risk of hallucination and makes the model more reliable for complex questions.

- Community and Collaboration: The presence of a supportive community facilitates shared knowledge, ongoing improvement in AI accessibility, and collaborative troubleshooting, particularly with models tailored for less powerful hardware.
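
The hardware and quantization points above come down to simple arithmetic: weight storage is roughly the parameter count times the bits per weight. The sketch below is a rough estimate only. The transcript summary does not state which bit width the model uses, so the bit widths tried here are illustrative, and the 8 GB `overhead_gb` allowance (for context cache and runtime buffers) is an assumption, as are the function names.

```python
def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_memory(n_params: float, bits_per_weight: float,
                   ram_gb: float, vram_gb: float,
                   overhead_gb: float = 8.0) -> bool:
    """True if weights plus an assumed overhead fit in RAM and VRAM combined."""
    needed = model_size_gb(n_params, bits_per_weight) + overhead_gb
    return needed <= ram_gb + vram_gb


N_PARAMS = 500e9   # 500 billion parameters, as described in the summary
RAM_GB = 128       # system RAM in the described setup
VRAM_GB = 24       # an RTX 3090 has 24 GB of VRAM

# Illustrative bit widths; the actual quantization level is not given.
for bits in (16, 8, 4, 2):
    size = model_size_gb(N_PARAMS, bits)
    verdict = "fits" if fits_in_memory(N_PARAMS, bits, RAM_GB, VRAM_GB) else "does not fit"
    print(f"{bits:>2}-bit: {size:7.1f} GB of weights -> {verdict}")
```

Under these assumptions, only very aggressive quantization brings a model of this size within 152 GB of combined memory, which is why the bit width matters as much as the hardware.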

Insights

- User Empowerment: Running powerful AI on personal devices gives individuals direct control over their tools and data and decentralizes the technology. The speaker emphasizes the potential for personal agency amid corporate dominance.

- Optimizing Performance: Understanding hardware requirements and configuration details can greatly improve how well a local model runs. The right drivers and setup are essential to getting the most out of local AI.

- AI as a Thinking Tool: The discussion promotes using AI not merely for task completion but as a cognitive enhancement tool, encouraging critical thinking and deeper engagement with content.

Actionable Advice

- Hardware Configuration: For best results, invest in a strong CPU and plenty of RAM (the setup described uses 128 GB), alongside a capable GPU such as the RTX 3090.

- Using Context Effectively: When interacting with AI models, provide rich context within queries to improve response accuracy and decrease the likelihood of AI “hallucination.”

- Participate in Community Discussions: Engage in forums and communities focused on AI development to share insights, troubleshoot issues, and discover new models and methods.

- Experiment with Different Models: Explore various quantized models on open platforms like Hugging Face to find the one that best suits individual needs or tasks.
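
As one way to act on the advice about providing rich context, the sketch below packs reference passages into a prompt while staying under a token budget. It is a minimal illustration, not the method used in the video: the 4-characters-per-token estimate is a rough assumption (real tokenizers vary by model), the prompt layout is arbitrary, and `estimate_tokens`, `build_prompt`, and `reserve_for_answer` are hypothetical names.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; an assumption, since real tokenizers differ."""
    return max(1, round(len(text) / chars_per_token))


def build_prompt(question: str, passages: list[str],
                 budget_tokens: int = 48_000,
                 reserve_for_answer: int = 2_000) -> str:
    """Add passages in order until the estimated token budget is used up."""
    remaining = budget_tokens - reserve_for_answer - estimate_tokens(question)
    kept = []
    for passage in passages:
        cost = estimate_tokens(passage)
        if cost > remaining:
            break  # stop at the first passage that no longer fits
        kept.append(passage)
        remaining -= cost
    context = "\n\n".join(kept)
    return f"Context:\n{context}\n\nQuestion: {question}"


# Usage: the most relevant passages should come first in the list.
notes = [
    "Quantization stores model weights at lower numeric precision.",
    "The described setup uses 128 GB of RAM and an RTX 3090.",
]
prompt = build_prompt("How much RAM does the setup use?", notes)
print(prompt)
```

Ordering passages by relevance before calling `build_prompt` matters here, because anything past the budget is dropped rather than summarized.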

Supporting Details

- Quantization vs. Distillation: This distinction is critical to understanding model size and performance. Quantization keeps the original model's architecture and lowers the numeric precision of its weights, while distillation trains a smaller model to imitate a larger one. Both make models cheaper to run, and both trade away some fidelity in exchange.

- Future of AI Development: The potential for distributed model training suggests a collaborative future where users can contribute to and enhance AI functionality collectively.
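
To make the quantization versus distillation distinction above concrete, here is a toy sketch in plain Python. It is illustrative only and is not the scheme used by the model in the video: real local-inference formats typically quantize in small blocks and at lower bit widths, and practical distillation combines the soft-target term shown here with other loss terms. All function names are hypothetical.

```python
import math


def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid zero scale
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the scale."""
    return [q * scale for q in quantized]


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with a temperature parameter."""
    peak = max(logits)
    exps = [math.exp((x - peak) / temperature) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Quantization: same weights, lower precision, small rounding error.
weights = [0.12, -0.5, 0.33, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
print("max rounding error:", max(abs(a - b) for a, b in zip(weights, restored)))

# Distillation: a separate, smaller model is trained to match the teacher.
print("loss when student disagrees:", distillation_loss([2.0, 1.0, 0.0], [0.0, 1.0, 2.0]))
```

The quantized weights still belong to the original model, only stored more coarsely, whereas the distillation loss is a training signal for a different, smaller model.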

Personal Reflections

The emphasis on user control and community-driven progress resonates deeply with the current technological landscape, where corporate influence often stifles innovation and accessibility. The insights provided encourage a re-evaluation of interactions with technology, promoting a mindset that leverages AI as a partner rather than a mere tool, fostering an environment of continual learning and growth.
