Transformative Insights from DeepSeek's R1 Model Launch

In January 2025, DeepSeek released its R1 language model, which drew attention for its computational efficiency and open availability. Below, we summarize the main points from the Welch Labs video "How DeepSeek Rewrote the Transformer [MLA]".

Key Points

- DeepSeek's R1 Model Release: DeepSeek drew wide attention with the launch of R1, which, unusually among leading models, comes with publicly accessible model weights and inference code.

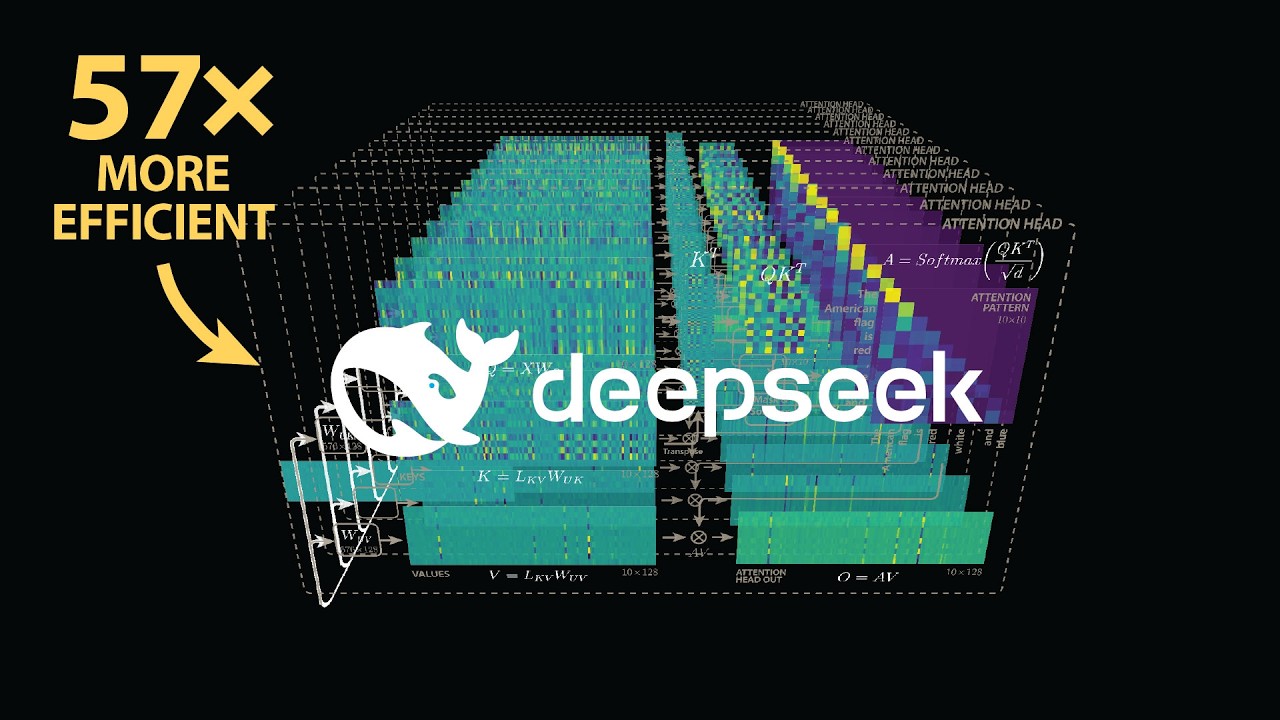

- Multi-Head Latent Attention (MLA): This technique modifies the attention mechanism at the core of the Transformer, shrinking the key-value (KV) cache by a factor of 57 and enabling text generation more than six times faster than a traditional Transformer.

- Attention Mechanisms: The video explains the attention patterns a model uses to relate the tokens in a sequence, and shows how their complexity compares with standard models like GPT-2.
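
To make the cache reduction concrete, here is a rough sketch that counts how many numbers must be cached per token, per layer. The dimensions (128 heads of size 128, a 512-dimensional latent, and a 64-dimensional positional key) are assumptions taken from DeepSeek's published V3 configuration, not from this summary, and the function names are hypothetical.

```python
def kv_values_per_token_mha(n_heads: int, head_dim: int) -> int:
    """Standard multi-head attention caches one key vector and one
    value vector for every head."""
    return 2 * n_heads * head_dim


def kv_values_per_token_mla(latent_dim: int, rope_dim: int) -> int:
    """MLA caches a single compressed latent shared by all heads,
    plus a small positional key that is also shared."""
    return latent_dim + rope_dim


# Assumed dimensions (not stated in this post).
N_HEADS = 128
HEAD_DIM = 128
LATENT_DIM = 512
ROPE_DIM = 64

mha = kv_values_per_token_mha(N_HEADS, HEAD_DIM)
mla = kv_values_per_token_mla(LATENT_DIM, ROPE_DIM)
reduction = mha / mla

print(mha, mla, round(reduction, 1))  # 32768 576 56.9
```

Under these assumptions the ratio comes out just under 57, which is consistent with the figure quoted above. Because the count is per token and per layer, the same ratio applies to the cache as a whole.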

Valuable Insights

- Public Access to AI Innovations: DeepSeek’s open-source strategy fosters collaboration, countering the predominance of proprietary development within the AI sector.

- Efficiency in AI: The model's reduced computational requirements spotlight a significant step towards sustainable AI, addressing rising concerns about energy consumption.

Actionable Advice

- Implementing Efficiency Strategies: Organizations developing language models should adopt DeepSeek's approaches for enhanced computational efficiency.

- Exploring Multi-Head Attention: Researchers are encouraged to experiment with increasing the number of attention heads and layers to discover potential performance improvements.

- Adopting Open Practices: Embrace transparency and collaboration in AI projects to support community-driven advancements.

Supporting Details

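- The mathematics of the attention mechanism shows how queries and keys combine to produce attention patterns: each token's query is compared against every key, and the resulting scores determine how much that token attends to the others. Understanding this step is essential for understanding how language models operate.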

- DeepSeek's innovations could shape both academic research and commercial applications of AI, promoting significant growth across the field.
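
As an illustration of how queries and keys produce an attention pattern, here is a minimal sketch of standard scaled dot-product attention with a causal mask, written in plain Python. It shows the general technique, not DeepSeek's implementation; the toy vectors and function names are made up for the example.

```python
import math


def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pattern(queries, keys, causal=True):
    """Return one row of attention weights per query.

    Each score is the dot product of a query with a key, scaled by
    1/sqrt(d). With causal=True, a token cannot attend to later tokens.
    """
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)
    pattern = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # masked: weight becomes 0
            else:
                scores.append(scale * sum(a * b for a, b in zip(q, k)))
        pattern.append(softmax(scores))
    return pattern


# Toy example: three tokens with 2-dimensional queries and keys.
queries = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

pattern = attention_pattern(queries, keys)
for row in pattern:
    print([round(w, 3) for w in row])
```

Each row sums to 1, and the entries above the diagonal are zero because of the causal mask. In a full attention layer, these weights would then be used to take a weighted average of the value vectors.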

Personal Reflections

The video's points about efficiency speak directly to a current challenge in AI: balancing performance against resource consumption. DeepSeek's commitment to open-sourcing its work may redefine industry standards and could inspire a more community-driven evolution of AI technology.

By pursuing alternative computational strategies, we may uncover transformative changes in AI applications across various sectors.

Watch the Full Video

To gain a deeper understanding of DeepSeek's innovative approaches, check out the full tutorial here:

Conclusion

With the invaluable insights gained from DeepSeek's contributions to the AI field, we can look forward to a future where language models and computational efficiency go hand in hand. Join us on this exciting journey of exploration and growth in AI!
